Learn to troubleshoot some of the most complex Oracle performance problems - real life case studies by Tanel Põder

Learn to troubleshoot some of the most complex Oracle performance problems - real life case studies by Tanel Põder Learn to troubleshoot some of the most complex Oracle performance problems - real life case studies by Tanel Põder

Learn to troubleshoot some of the most complex Oracle performance problems - real life case studies by Tanel Põder

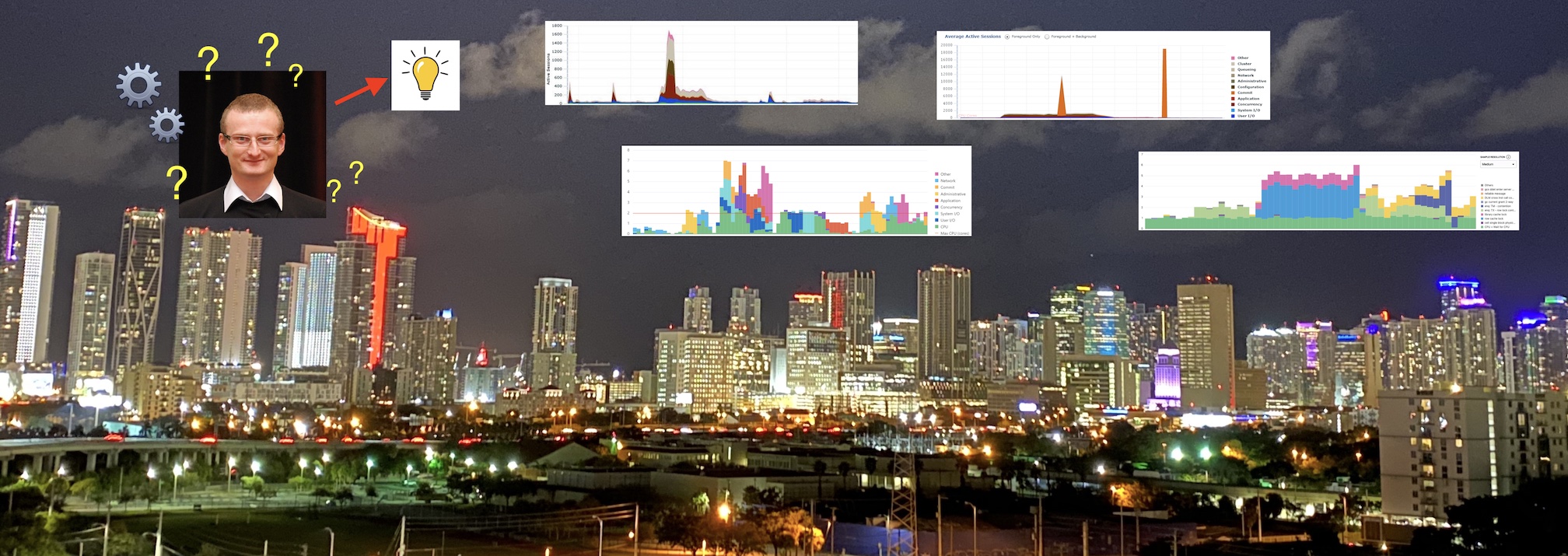

I’m running another performance event, this time about Troubleshooting Very Complex Oracle Performance Problems and all the talks are done by me! (I may have to wear different hats or something).

This conference is going to be different. It will be about some of the most complex Oracle performance problems I have troubleshooted in the last couple of years. All of these scenarios forced me to improve my own troubleshooting techniques and understanding - and often realize something fundamentally new, at new depths of complex Oracle database behavior.

I use the name “conference” instead of “seminar” as I intend to keep the conference spirit alive. We’ll have the Slack chat open for all attendees and I’ll invite some panelists to hang out via video too, to comment and ask me tough questions during the discussion sessions. Of course you can ask questions at any time too!

All four talks are designed to go to “beyond advanced” levels and you will learn completely new things about troubleshooting hard Oracle problems and complex systems in general. I’m confident in this because I learned completely new things while figuring out these problems too!

You should already know how to use ASH for activity drilldown, V$SESSTAT counters for gathering additional evidence and have read a stack trace or two. You hopefully have already used tools from my TPT toolset, like ashtop, snapper and latchprofx, because we’ll have to go beyond that!

If you have attended my Advanced Oracle Troubleshooting training class, you’ll be prepared. Otherwise, if you generally understand what I talk about in my blog entries (and Oracle hacking sessions), you’re good too! While I do teach the systematic troubleshooting approach in my AOT class, we don’t have time there to spend hours digging into a single problem scenario. The upcoming conference is exactly for such very focused very-deep-dives:

There’s more good news! Last year we ended up delivering the same event twice to give the attendees more flexibility in the pandemic uncertainty. I liked that format - even though we delivered pretty much the same presentations, it was possible to use more than one way to explain the same ideas and answer more questions.

So, I will run this event twice too, delivering the same presentations live, in April and September. The first run is most suitable for the US/EMEA timezones and the other for US/APAC. The attendees can attend both events for the price of one! Even if you don’t attend the April run live due to time difference, you can still replay the talk videos and ask questions in Slack after the event (and be ready to absorb even more info during the September run).

April run:

September run:

| Ticket type | Price Per Attendee | Sign up! |

|---|---|---|

| Early Bird | Expired | |

| Standard | Expired |

You may well know how to troubleshoot typical scenarios with symptoms listed below, but all these examples are about problems that turned out to be even more complex than the typical cases! Think of hitting small issues or bugs that later cause an “explosion”, multiple moving parts colliding and even Oracle/OS interactions with unlucky timing.

So, we will have to dive very deep. In the introduction of each talk, I will briefly explain the typical approaches for such problems that “normally work”. However, these issues are beyond normal, so we’ll spend most of the time on systematically diving even deeper into the unknown.

Naturally, I can’t share any customer code or data, so the problem scenarios are completely reproduced from scratch, using generic schemas and “application” code. I’ll run the examples in my high-performance lab environment, built for reproducing performance problems under heavy load. The seminar attendees will also get the source code I created, for reproducing these issues in their own sandboxes.

This will allow you to learn the “beyond advanced” Oracle troubleshooting mindset for drilling down into such complexity; and prepare you for quickly addressing even the wildest future problems!

April event, times in US Eastern time zone:

| Day 1 | Speaker | Topic |

|---|---|---|

| 11:00-12:30 | Tanel | Index block splits and “enq: TX - allocate ITL entry” contention spikes |

| 12:30-13:00 | Tanel | Break/Q&A/Chat |

| 13:00-14:30 | Tanel | A cursor sharing problem going way beyond the usual suspects |

| 14:30-15:00 | Discussion | Tanel + discussion with other performance geeks |

A Slack channel will be open for conference chat between the two days.

| Day 2 | Speaker | Topic |

|---|---|---|

| 11:00-12:30 | Tanel | Random cache buffers chains latch contention spikes |

| 12:30-13:00 | Tanel | Break/Q&A/Chat |

| 13:00-14:30 | Tanel | A cursor sharing problem going way beyond the usual suspects |

| 14:30-15:00 | Discussion | Discussion (more performance stuff!) |

| Tanel Põder |

Tanel Põder is a long-time computer performance geek, consultant and an entrepreneur. He is working mostly with enterprise data platforms like Oracle, Hadoop, Spark and the underlying operating systems. Tanel has built a number of performance troubleshooting tools for Linux and Oracle. He is a co-founder of Gluent, a company that builds data sharing solutions for enterprises. Tanel holds two patents in data virtualization space and is a co-author of the Expert Oracle Exadata book. He is currently researching machine learning, so he could replace himself with an AI bot and retire in 2030. Books: Expert Oracle Exadata; Tools: Snapper, pSnapper, 0x.Tools |

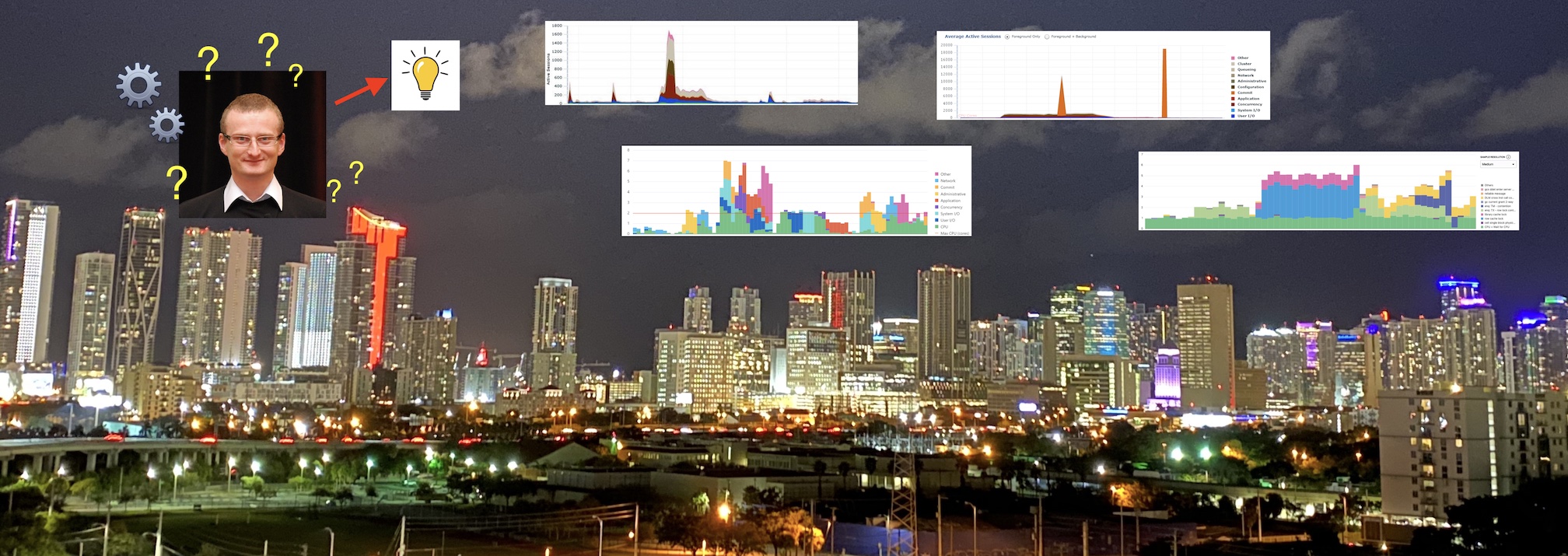

We’ll start from the “simpler” problem of having lots of “gc buffer busy waits” waits showing up on index blocks in a system that was doing lots of concurrent inserts. Fixing the buffer busy waits was relatively easy by changing the physical layout of some indexes on columns with monotonically increasing values. Out of the options available, we chose to hash-partition the problematic indexes to spread the contention.

However, after that bottleneck was removed, another wait event popped up: “enq: TX - allocate ITL entry” on index blocks and we started seeing multi-second “mini-hangs” despite using pretty small transactions. When drilling down deep into this issue with various experiments, custom test cases and block dumps, we discovered a little known limitation in how Oracle handles index block splits under heavy concurrency.

In this talk, Tanel will walk you through the systematic troubleshooting path to discovery of this internal limitation and the way of thinking for coming up with effective workarounds for such issues. I have uploaded a somewhat similar Oracle troubleshooting video walkthrough of another customer’s problem I helped to solve in past, but in this conference talk we will have to go even deeper than that!

The high level symptoms of this problem looked like “the usual”. Occasionally, Oracle decided to not reuse existing child cursors for some queries in a busy OLTP system, causing new child cursors to be parsed and loaded into the library cache. This caused exclusive mutex holds and various spikes of library cache mutex, cursor pin and shared pool latch contention waits, rendering the application unusable. You might think (like I did), that it’s just matter of running my nonshared.sql script or perhaps checking the detailed v$sql_shared_cursor.reason XML fields to quickly pinpoint the root cause, but no luck!

The cursor non-sharing reason was listed as BIND_MISMATCH (and not any of these usual suspects: BIND_EQUIV_FAILURE, HASH_MATCH_FAILED or BIND_LENGTH_UPGRADABLE). Furthermore, the problem showed up even after testing with disabled “fancy” features like adaptive cursor sharing, etc. Knowing that applications using varying bind buffer lengths can cause additional child cursor creation, we even tested using “fixed” static bind buffer sizes using the Oracle diagnostic event 10503 that is documented in My Oracle Support (ORA-10503: enable user-specified graduated bind lengths). Still no luck!

In this presentation, Tanel will first briefly go through the usual suspects and the typical troubleshooting techniques that were not good enough for solving this problem. Then he’ll show how to systematically dig much deeper into such cursor sharing problems. The end result was discovery of a new, rarely occurring bug that is still not fixed even in Oracle 19c.

The customer didn’t want to spend weeks or months trying to get some patch or an official solution as they needed to fix the problem now. Also, the vendor support was unable to reproduce the bug using exactly the same test case code as our issue. Thus, we had to come up with a creative workaround to avoid hitting the database bug that impacted the customer’s business.

You probably already know that the cache buffers chains latch gets can be used in shared mode in some cases. A buffer get done quickly without buffer pinning, in the cache buffer chain examination phase (shared latch get), has been possible since Oracle 9i. Back then it was used only in very special cases, like lookups from single-table hash cluster blocks known to have no hash collisions and later on unique lookups from index root blocks.

Years have gone by and in modern versions where Oracle is using optimized “fast pins”, the cache buffers chains latch gets are “mostly-shared” for many more types of logical IOs. For example, buffer gets done by index range scans and even full table scans can use shared gets on the “CBC” latches.

This is great for scalability, especially when thousands of sessions are accessing some datablocks concurrently and super-frequently (lookup tables, index root blocks, even the “latest” index leaf blocks on monotonically increasing primary key/timestamp columns). However, since these “mostly shared” latches still need to be taken in exclusive mode every now and then, you can hit “scalability hiccups” that may result in unexpected spikes of waits and latency!

In this talk, Tanel will first go over the traditional, well known techniques for high Oracle CPU usage and cache buffers chains latch contention troubleshooting, but will also show where they fall short when troubleshooting really complex scenarios. In this intensive drilldown, we will go well beyond the usual Oracle troubleshooting techniques where necessary, all the way to reading the latch holder info directly from SGA memory.

This talk is based on multiple customer scenarios that exhibited similar patterns, mainly that the “top level symptoms” point to a different direction from the ultimate root cause. Only after piercing the veil - digging multiple levels deeper - you find what really causes the spikes, performance hiccups and mini-hangs. Also, it’s not always the database that’s at fault! In big and busy enough systems, things can go wrong in other layers of the stack too. Operating system scheduler, memory allocation issues, network and application connection management, they all may cause issues for the database (not caused by the database).

In this talk, Tanel will walk you through troubleshooting and fixing a generally badly performing database, where a number of high level symptoms, like buffer busy waits and various enqueue lock contention events and even intermittently slow commits end up being caused by something completely different from the conventional Oracle troubleshooting wisdom. You will learn how to navigate scenarios with multiple moving parts that initially don’t make any sense, by systematically revealing the unknowns and getting closer to the root cause layer by layer, step by step.

To give you some idea of the depths we’ll go in - here’s a really old article (and presentation deck) of my past troubleshooting gig about Oracle CPU usage & cursor: pin S wait spikes. In the upcoming conference talk, we’ll be troubleshooting a completely new set of problems on a very busy OLTP database, using a recent Oracle DB version, on a powerful Linux machine with very fast storage and over 100 CPUs ;-)